Tuesday, November 29

Smart Dust

What Is Smart Dust?

Berkeley’s Smart Dust project, led by Professors

Pister and Kahn, explores the limits on size and power consumption in

autonomous sensor nodes. Size reduction is paramount, to make the nodes as

inexpensive and easy-to-deploy as possible. The research team is confident that

they can incorporate the requisite sensing, communication, and computing

hardware, along with a power supply, in a volume no more than a few cubic

millimeters, while still achieving impressive performance in terms of sensor

functionality and communications capability. These millimeter-scale nodes are

called “Smart Dust.” It is certainly within the realm of possibility that

future prototypes of Smart Dust could be small enough to remain suspended in

air, buoyed by air currents, sensing and communicating for hours or days on

end.

Smart Dust Technology

Integrated into a single package are:

1. MEMS sensors
2. A MEMS beam-steering mirror for active optical transmission
3. A MEMS corner cube retroreflector for passive optical transmission
4. An optical receiver
5. Signal processing and control circuitry
6. A power source based on thick-film batteries and solar cells

This remarkable package can sense and communicate, and it is self-powered. A major challenge is to incorporate all these functions while maintaining very low power consumption.

Operation Of The Mote

The Smart Dust mote is run by a microcontroller that not only determines the tasks performed by the mote, but also controls power to the various components of the system to conserve energy. Periodically the microcontroller gets a reading from one of the sensors, which measure physical or chemical stimuli such as temperature, ambient light, vibration, acceleration, or air pressure; it then processes the data and stores it in memory. It also occasionally turns on the optical receiver to see if anyone is trying to communicate with it. This communication may include new programs or messages from other motes. In response to a message or on its own initiative, the microcontroller will use the corner cube retroreflector or laser to transmit sensor data or a message to a base station or another mote.

The primary constraint in the design of the Smart

Dust motes is volume, which in turn puts a severe constraint on energy since we

do not have much room for batteries or large solar cells. Thus, the motes must

operate efficiently and conserve energy whenever possible. Most of the time,

the majority of the mote is powered off with only a clock and a few timers

running. When a timer expires, it powers up a part of the mote to carry out a

job, then powers off. A few of the timers control the sensors that measure one

of a number of physical or chemical stimuli such as temperature, ambient light,

vibration, acceleration, or air pressure. When one of these timers expires, it

powers up the corresponding sensor, takes a sample, and converts it to a

digital word. If the data is interesting, either it is stored directly in

the SRAM or the microcontroller is powered up to perform more complex

operations with it. When this task is complete, everything is again powered

down and the timer begins counting again.
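
The timer-driven duty cycle described above can be sketched in a few lines of Python. This is only an illustration under assumed values: the `Timer` class, the sampling periods, the `read_sensor` callback, and the `is_interesting` threshold are all hypothetical and are not taken from the Smart Dust design.

```python
from dataclasses import dataclass, field


@dataclass
class Timer:
    """Hypothetical countdown timer that wakes one sensor when it expires."""
    sensor: str
    period: int                      # clock ticks between samples (assumed)
    remaining: int = field(init=False)

    def __post_init__(self):
        self.remaining = self.period

    def tick(self) -> bool:
        """Advance one clock tick; return True when the timer expires."""
        self.remaining -= 1
        if self.remaining == 0:
            self.remaining = self.period   # the timer begins counting again
            return True
        return False


def is_interesting(value, threshold=100):
    """Assumed rule: a sample is interesting if it exceeds a threshold."""
    return value > threshold


def run(timers, read_sensor, ticks):
    """Simulate the mote: between samples only the clock and timers run."""
    sram = []
    for now in range(1, ticks + 1):
        for timer in timers:
            if timer.tick():
                # Power up the sensor, take one sample, digitize it.
                sample = read_sensor(timer.sensor, now)
                if is_interesting(sample):
                    sram.append((now, timer.sensor, sample))
                # The sensor is powered down again at this point.
    return sram


if __name__ == "__main__":
    timers = [Timer("temperature", 5), Timer("light", 3)]
    # Stand-in for a real sensor read: the value simply grows with time.
    print(run(timers, lambda sensor, now: now * 20, 10))
```

In this toy model nothing happens on most ticks, which mirrors the idea that the mote spends most of its time powered off with only the clock and timers running.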

Communicating From A Grain Of Sand

Smart Dust’s full potential can only be attained

when the sensor nodes communicate with one another or with a central base

station. Wireless communication facilitates simultaneous data collection from

thousands of sensors. There are several options for communicating to and from a

cubic-millimeter computer.

Radio-frequency and optical communications each

have their strengths and weaknesses. Radio-frequency communication is well

understood, but currently requires minimum power levels in the

multi-milliwatt range due to analog mixers, filters, and oscillators. If whisker-thin

antennas of centimeter length can be accepted as a part of a dust mote, then

reasonably efficient antennas can be made for radio-frequency communication.

While the smallest complete radios are still on the order of a few hundred

cubic millimeters, there is active work in the industry to produce

cubic-millimeter radios.

Moreover, RF techniques are not currently practical for Smart Dust because of the following disadvantages:

1. Dust motes offer very limited space for antennas, thereby demanding extremely short-wavelength (high-frequency) transmission. Communication in this regime is not currently compatible with the low-power operation of Smart Dust.
2. Radio transceivers are relatively complex circuits, making it difficult to reduce their power consumption to the required microwatt levels.
3. They require modulation, band-pass filtering, and demodulation circuitry.
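
To see why a small antenna pushes the carrier frequency up, the following sketch relates antenna length to frequency. It assumes a quarter-wave antenna (length equal to one quarter of the wavelength), which is a common rule of thumb and not a figure from the Smart Dust project; the function name is illustrative.

```python
C = 299_792_458.0  # speed of light in m/s


def quarter_wave_frequency_hz(antenna_length_m):
    """Carrier frequency whose quarter wavelength equals the antenna length."""
    wavelength_m = 4.0 * antenna_length_m
    return C / wavelength_m


if __name__ == "__main__":
    for length_mm in (1.0, 10.0):
        f = quarter_wave_frequency_hz(length_mm / 1000.0)
        print(f"{length_mm:4.0f} mm antenna -> about {f / 1e9:.1f} GHz")
```

Under this assumption a 1 mm antenna corresponds to roughly 75 GHz, while a centimeter-length whisker brings the carrier down to about 7.5 GHz.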

Corner Cube Retroreflector

This MEMS structure makes it possible for dust motes to use passive optical transmission techniques, i.e., to transmit modulated optical signals without supplying any optical power. It comprises three mutually perpendicular mirrors of gold-coated polysilicon. The CCR has the property that any incident ray of light is reflected back to the source (provided that it is incident within a certain range of angles centered about the cube’s body diagonal). If one of the mirrors is misaligned, this retroreflection property is spoiled. The microfabricated CCR contains an electrostatic actuator that can deflect one of the mirrors at kilohertz rates. It has been demonstrated that a CCR illuminated by an external light source can transmit back a modulated signal at kilobits per second. Since the dust mote itself does not emit light, the passive transmitter consumes little power. Using a microfabricated CCR, data transmission at a bit rate of up to 1 kilobit per second over a range of up to 150 meters is possible with a 5-milliwatt illuminating laser.
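
As an illustration of passive transmission, the sketch below encodes bytes as a sequence of mirror states using simple on-off keying: an aligned mirror returns light to the interrogator (bit 1) and a deflected mirror does not (bit 0). The modulation format and the function names are assumptions made for illustration; the text above does not specify how bits are mapped to mirror positions.

```python
def bytes_to_mirror_states(payload):
    """Map each bit (most significant first) to a mirror state.

    True means the mirror is aligned and the CCR reflects light back.
    """
    states = []
    for byte in payload:
        for bit in range(7, -1, -1):
            states.append(bool((byte >> bit) & 1))
    return states


def mirror_states_to_bytes(states):
    """Inverse mapping, as an interrogating receiver might decode it."""
    out = bytearray()
    for i in range(0, len(states), 8):
        byte = 0
        for state in states[i:i + 8]:
            byte = (byte << 1) | int(state)
        out.append(byte)
    return bytes(out)


def transmit_seconds(num_bytes, bit_rate_bps=1000.0):
    """Time needed to send a payload at the 1 kbit/s rate quoted above."""
    return num_bytes * 8 / bit_rate_bps


if __name__ == "__main__":
    states = bytes_to_mirror_states(b"hi")
    print(mirror_states_to_bytes(states), transmit_seconds(125))
```

At 1 kilobit per second, a 125-byte log of readings would take one second to upload once the mote is illuminated.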

It should be emphasized that CCR-based passive optical links require an uninterrupted line of sight. The CCR-based transmitter is also highly directional: a CCR can transmit to the base-station transceiver (BTS) only when the CCR body diagonal happens to point towards the BTS to within a few tens of degrees. A passive transmitter can be made more omnidirectional by employing several CCRs, oriented in different directions, at the expense of increased dust mote size.
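
The directionality constraint can be expressed as a simple geometric test: a mote can reply only if the direction to the base station lies within some acceptance cone around at least one CCR body diagonal. The sketch below assumes a 30-degree half-angle purely as a placeholder for "a few tens of degrees"; that value and the function names are hypothetical.

```python
import math


def angle_between_deg(u, v):
    """Angle in degrees between two 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    cos = max(-1.0, min(1.0, dot / norm))   # guard against rounding error
    return math.degrees(math.acos(cos))


def can_transmit(diagonals, to_base_station, half_angle_deg=30.0):
    """True if any CCR body diagonal points close enough to the base station."""
    return any(
        angle_between_deg(d, to_base_station) <= half_angle_deg
        for d in diagonals
    )


if __name__ == "__main__":
    one_ccr = [(1, 1, 1)]
    two_ccrs = [(1, 1, 1), (-1, -1, -1)]
    behind = (-1, -1, -1)
    print(can_transmit(one_ccr, behind), can_transmit(two_ccrs, behind))
```

Adding a second CCR facing the opposite way covers a direction the single CCR cannot reach, which is the trade of coverage against mote size described above.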

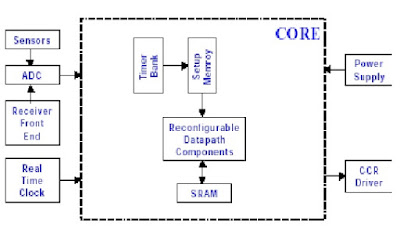

Core Functionality Specification

Consider the case of military base monitoring, in which on the order of a thousand Smart Dust motes are deployed outside a base by a micro air vehicle to monitor vehicle movement. The motes can be used to determine when vehicles were moving, what types of vehicles they were, and possibly how fast they were travelling. The motes may contain sensors for vibration, sound, light, IR, temperature, and magnetization. CCRs will be used for transmission, so communication will only be between a base station and the motes, not between motes. A typical operation for this scenario would be to acquire data, store it for a day or two, then upload the data after being interrogated with a laser. However, to see what functionality the architecture needs to provide and how much reconfigurability is necessary, an exhaustive list of the potential activities in this scenario was made. The operations that the mote must perform can be broken down into two categories: those that provoke an immediate action and those that reconfigure the mote to affect future behavior.
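
The split between immediate actions and reconfiguration can be sketched as two command tables. The command names (`UPLOAD`, `SET_PERIOD`), the `Mote` fields, and the default sampling period are invented for this example; the source does not list the actual operations.

```python
from dataclasses import dataclass, field


@dataclass
class Mote:
    sample_period_s: int = 60                   # assumed default setting
    log: list = field(default_factory=list)     # stored sensor readings

    def upload(self):
        """Immediate action: hand over the stored data and clear the log."""
        data, self.log = self.log, []
        return data

    def set_sample_period(self, seconds):
        """Reconfiguration: changes how the mote behaves from now on."""
        self.sample_period_s = seconds


# Hypothetical command tables, one per category.
IMMEDIATE = {"UPLOAD": Mote.upload}
RECONFIGURE = {"SET_PERIOD": Mote.set_sample_period}


def handle(mote, command, *args):
    """Dispatch an incoming command to the matching category."""
    if command in IMMEDIATE:
        return IMMEDIATE[command](mote, *args)
    if command in RECONFIGURE:
        RECONFIGURE[command](mote, *args)
        return None
    raise ValueError(f"unknown command: {command}")


if __name__ == "__main__":
    mote = Mote()
    mote.log.append(("vibration", 0.7))
    handle(mote, "SET_PERIOD", 10)
    print(handle(mote, "UPLOAD"), mote.sample_period_s)
```

Keeping the two tables separate makes it explicit which commands produce a reply to the interrogator and which only change stored settings.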

Summary

Smart dust is made up of thousands of

sand-grain-sized sensors that can measure ambient light and temperature. The

sensors -- each one is called a "mote" -- have wireless communications

devices attached to them, and if you put a bunch of them near each other,

they'll network themselves automatically.

Smart Fabrics Electronics Seminar Topics

Introduction

Today,

the interaction of human individuals with electronic devices demands specific

user skills. In the future, improved user interfaces can largely alleviate this

problem and considerably broaden the use of microelectronics. In this context,

the concept of smart clothes promises greater user-friendliness, user

empowerment, and more efficient services support. Wearable electronics responds

to the acting individual in a more or less invisible way. It serves individual

needs and thus makes life much easier. We believe that today, the cost level of

important microelectronic functions is sufficiently low and enabling key

technologies are mature enough to exploit this vision to the benefit of

society. In the following, we present various technology components to enable

the integration of electronics into textiles.

Advances

in textile technology, computer engineering, and materials science are

promoting a new breed of functional fabrics. Fashion designers are adding

wires, circuits, and optical fibers to traditional textiles, creating garments

that glow in the dark or keep the wearer warm. Meanwhile, electronics engineers

are sewing conductive threads and sensors into body suits that map users'

whereabouts and respond to environmental stimuli. Researchers agree that the

development of genuinely interactive electronic textiles is technically

possible, and that challenges in scaling up the handmade garments will

eventually be overcome. Now they must determine how best to use the technology.

Electronic

textiles (e-textiles) are fabrics that have electronics and interconnections

woven into them. Components and interconnections are a part of the fabric and

thus are much less visible and, more importantly, not susceptible to becoming

tangled together or snagged by the surroundings. Consequently, e-textiles can

be worn in everyday situations where currently available wearable computers

would hinder the user. E-textiles also have greater flexibility in adapting to

changes in the computational and sensing requirements of an application. The

number and location of sensor and processing elements can be dynamically

tailored to the current needs of the user and application, rather than being

fixed at design time. As the number of pocket electronic products (mobile

phone, palm-top computer, personal hi-fi, etc.) is increasing, it makes sense

to focus on wearable electronics, and start integrating today's products into

our clothes. The merging of advanced electronics and special textiles has

already begun. Wearable computers can now merge seamlessly into ordinary

clothing. Using various conductive textiles, data and power distribution as

well as sensing circuitry can be incorporated directly into wash-and-wear

clothing.

Wireless World

Whatever

the technical obstacles, researchers involved in the development of interactive

electronic clothing appear universally confident that context-aware coats and

sensory shirts are only a matter of time. Susan Zevin, acting director of the

Information Technology Laboratory at the US National Institute of Standards and

Technology (NIST), would like to see finished garments fitted with some form of

data encryption system before they reach consumers. After all, wearing a jacket

that is monitoring your every movement, recording details about your personal

well-being, or pinpointing your exact location at a moment in time, adds a

whole new dimension to issues of wireless security and personal privacy.

"The

challenge, I think, for industry is to build in the security and privacy before

the technology is deployed, so the user doesn't have to worry about having his

or her T-shirt attacked by a hacker, for example," says Zevin.

"People don't want to have to upload and download intrusion detection

systems themselves. Pervasive computing should also mean pervasive computer

security, and it should also mean pervasive standards and protocols for

privacy." She notes that the level of security required for electronic

textile garments will vary according to their applications.

Project Examples

Wearable Antennas

In

this program for the US Army, Foster-Miller integrated data and communications

antennas into a soldier uniform, maintaining full antenna performance, together

with the same ergonomic functionality and weight of an existing uniform. We

determined that a loop-type antenna would be the best choice for clothing

integration without interfering in or losing function during operations, and

then chose suitable body placement for antennas. With Foster-Miller's extensive

experience in electro-textile fabrication, we built embedded antenna prototypes

and evaluated loop antenna designs. The program established feasibility of the

concept and revealed specific loop antenna design tradeoffs necessary for field

implementation.

This

program provided one of the key foundations for Foster-Miller's participation

in the Objective Force Warrior program, aimed at developing the soldier ensemble of

the future, which will monitor individual health, transmit and receive

mission-critical information, and protect against numerous weapons, all while being

robust and comfortable.

Limitations and Issues of the "Smart

Shirt"

Some

of the wireless technology needed to support the monitoring capabilities of the

"Smart Shirt" is not completely reliable. The "Smart Shirt"

system uses Bluetooth and WLAN. Both of these technologies are in their

formative stages and it will take some time before they become dependable and

widespread.

Additionally, the technology seems to hold the greatest promise for medical monitoring. However, the "Smart Shirt" at this stage of development only detects irregularities in patients' vital statistics or emergency situations and alerts medical professionals to them. It does not yet respond to dangerous health conditions. Therefore, it will not help patients who face complications after surgery while far away from medical care, since the technology cannot yet fix or address these problems independently, without the presence of a physician. Research on this kind of responsiveness is ongoing.

Fabric Computing Devices

Designing

with unusual materials can create new user attitudes towards computing devices.

Fabric has many physical properties that make it an unexpected physical

interface for technology. It feels soft to the touch, and is made to be worn

against the body in the most intimate of ways. Materially, it is both strong

and flexible, allowing it to create malleable and durable sensing devices.

Constructing computers and computational devices from fabric also suggests new

forms for existing computer peripherals, like keyboards, and new types of

computing devices, like jackets and hats.

Monday, November 28

3D Printing

3D printing is a form of additive manufacturing technology in which a three-dimensional object is created by laying down successive layers of material. Also known as rapid prototyping, it is a mechanized method whereby 3D objects are quickly made on a reasonably sized machine connected to a computer containing blueprints for the object.

Stereolithography 3D Printers

Stereolithographic 3D printers (known as SLAs, or stereolithography apparatus) position a perforated platform just below the surface of a vat of liquid photocurable polymer. A UV laser beam then traces the first slice of an object on the surface of this liquid, causing a very thin layer of photopolymer to harden. The perforated platform is then lowered very slightly and another slice is traced out and hardened by the laser. This is repeated slice by slice until a complete object has been printed and can be removed from the vat of photopolymer, drained of excess liquid, and cured.
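
The layer-by-layer loop can be sketched as follows. The layer thickness is a free parameter here, since the text only says each layer is "very thin"; the function name and the example dimensions are illustrative assumptions.

```python
import math


def platform_depths_mm(object_height_mm, layer_thickness_mm):
    """Return the platform depth below the surface after each hardened slice.

    One slice is traced and hardened, then the platform is lowered by one
    layer thickness, until the full object height has been built.
    """
    n_layers = math.ceil(object_height_mm / layer_thickness_mm)
    return [
        min(i * layer_thickness_mm, object_height_mm)
        for i in range(1, n_layers + 1)
    ]


if __name__ == "__main__":
    depths = platform_depths_mm(1.0, 0.25)
    print(len(depths), "slices, final depth", depths[-1], "mm")
```

The number of slices, and so the build time, grows in inverse proportion to the layer thickness chosen.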

Inkjet 3D Printing

Inkjet 3D printing creates the model one layer at a time by spreading a layer of powder and inkjet-printing a binder in the cross-section of the part. It is the most widely used 3D printing technology these days, for the following reasons:

1) It is the only technology that allows the printing of full-color prototypes.
2) Unlike stereolithography, inkjet 3D printing is optimized for speed, low cost, and ease of use.
3) It requires no toxic chemicals like those used in stereolithography.
4) Minimal post-printing finish work is needed; one needs only to use the printer itself to blow off the surrounding powder after the printing process.
5) It allows overhangs, and excess powder can be easily removed with an air blower.

System Overview

Our

3D printing process is automatic, and thus easy for any user. Still, a lot is

taking place under the hood. This section provides an overview of the ZPrinter

system and the steps involved in printing a 3D physical model. We will refer to

the 3D printer diagram in Figure 2 as we detail the 3D printing process:

1) Automatic air filter: ensures that all powder stays within the confines of the machine, emitting only clean air into the office or workroom environment.
2) Binder cartridge: contains the water-based adhesive that solidifies the powder.
3) Build chamber: the area where the part is produced.
4) Carriage: slides along the gantry to position the print heads.
5) Compressor: generates compressed air to depowder finished parts.

Ease Of Use

Our

vision of making on-demand prototyping accessible to everyone requires that

printing a model be almost as easy as printing a document. We envisioned that

every designer, engineer, intern or student should be able to ZPrint a

prototype. And like a document printer, a 3D printer should be perfectly

compatible with a professional office environment.

To

achieve these goals, the ZPrinter automates operation at nearly every step.

This includes setup, powder loading, self-monitoring of materials and print

status, printing, and removal and recycling of loose powder. The ZPrinter is

quiet, produces zero liquid waste and employs negative pressure in a

closed-loop system to contain airborne particles. Powder and binder cartridges

ensure clean loading of build materials. Plus, an integrated fine-powder

removal chamber reduces the footprint of the system. All of these advances mean

that no special training is required, and the “hands on” time for operating the

3D printer is just a few minutes.

You

control the ZPrinter from either the desktop or the printer. ZPrint software

lets you monitor powder, binder, and ink levels from your desktop, and remotely

read the machine’s LCD display. The on-board printer display and intuitive

interface enable you to perform most operations at the machine. Plus, the ZPrinter

runs unattended during the printing process, requiring user interaction only

for setup and part removal.

Applications

1) Education
2) Healthcare: rapidly produce 3D models to reduce operating time, enhance patient and physician communications, and improve patient outcomes.